Improving Deep Neural Learning Networks (Part 3): Hyperparameter Tuning and Batch Normalization

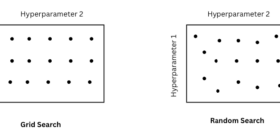

Getting the algorithm right is half the battle; knowing how to tune it, normalize it, and deploy it is what separates research code from production systems.

1. Hyperparameter Tuning

1.1. Tuning Process

Not all hyperparameters are equally important. The common priority ranking is:

- Most important: learning rate α
- 2nd priority: momentum β, mini-batch size, number of hidden units
- 3rd priority: number of layers, learning rate decay
- 4th priority (rarely tuned): Adam’s β₁ = 0.9, β₂ = 0.999, ε = 1e-8

Hyperparameter Search Methods:

Source: image by author

Grid Search: try every […]
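As a rough illustration of grid search in its usual sense (evaluating every combination of a fixed set of candidate values), here is a minimal sketch. The candidate values, the `validation_loss` stand-in, and the `grid_search` helper are all assumptions made for the example, not the author's code; in practice the objective would be a full train-and-validate run.

```python
import itertools

# Hypothetical candidate values (assumption). Following the priority ranking,
# the learning rate alpha gets the widest range, momentum beta a narrower one.
grid = {
    "alpha": [1e-4, 1e-3, 1e-2, 1e-1],
    "beta": [0.9, 0.95, 0.99],
}


def validation_loss(alpha, beta):
    """Stand-in objective (assumption): replace with a real training run."""
    return (alpha - 1e-2) ** 2 + 0.1 * (beta - 0.9) ** 2


def grid_search(grid, objective):
    """Evaluate every combination in the grid and return the lowest-scoring one."""
    names = list(grid)
    best_params, best_score = None, float("inf")
    for values in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        score = objective(**params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score


best_params, best_score = grid_search(grid, validation_loss)
print(best_params, best_score)
```

The number of evaluations is the product of the list lengths (4 × 3 = 12 here), so the cost grows quickly as more hyperparameters are added to the grid.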
