Building Composable Safety and Performance Layers for Agents in Rust

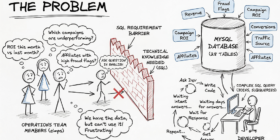

As AI agents move from prototypes to production systems, a recurring challenge has emerged: how do you enforce safety, optimize performance, and handle sensitive data consistently across every inference call without scattering that logic throughout your agent code? This is the problem we set out to solve with AutoAgents, an open-source AI agent framework written in Rust. Our latest feature, LLM Pipelines, introduces composable middleware layers for LLM inference, an approach inspired by how the web […]