KV Caching in LLMs: A Guide for Developers

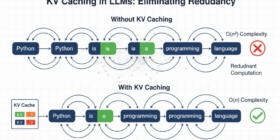

Language models generate text one token at a time. Without caching, they reprocess the entire sequence at every step.
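The difference can be sketched with a single attention head in NumPy. This is a minimal illustration under simplifying assumptions: one layer, one head, random vectors standing in for token embeddings, and no positional encoding or batching. The weight names `W_q`, `W_k`, `W_v` and the `KVCache` class are hypothetical, not any library's API. Without a cache, each step re-projects keys and values for the whole prefix. With a cache, only the newest token is projected and earlier rows are reused, and both paths produce the same attention output.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model = 8
# Hypothetical projection weights for one attention head (row-vector convention: x @ W)
W_q, W_k, W_v = (rng.standard_normal((d_model, d_model)) for _ in range(3))

def attend(q, K, V):
    """Scaled dot-product attention of one query vector over keys K and values V."""
    scores = K @ q / np.sqrt(q.shape[-1])
    weights = np.exp(scores - scores.max())  # numerically stable softmax
    weights /= weights.sum()
    return weights @ V

def step_without_cache(xs):
    """Re-project K and V for the entire prefix, then attend with the newest query."""
    X = np.stack(xs)
    K, V = X @ W_k, X @ W_v
    return attend(xs[-1] @ W_q, K, V)

class KVCache:
    """Append-only store of past keys and values for a single head (illustrative only)."""

    def __init__(self):
        self.keys, self.values = [], []

    def step(self, x):
        # Only the new token is projected; earlier key/value rows are reused as-is.
        self.keys.append(x @ W_k)
        self.values.append(x @ W_v)
        return attend(x @ W_q, np.stack(self.keys), np.stack(self.values))

# Random vectors stand in for the embeddings of five generated tokens.
tokens = [rng.standard_normal(d_model) for _ in range(5)]
cache = KVCache()
naive_outputs, cached_outputs = [], []
for t in range(len(tokens)):
    naive_outputs.append(step_without_cache(tokens[: t + 1]))
    cached_outputs.append(cache.step(tokens[t]))

# Both strategies agree up to floating-point rounding.
print(all(np.allclose(a, b) for a, b in zip(naive_outputs, cached_outputs)))
```

For a sequence of n tokens, the uncached loop projects 1 + 2 + … + n = n(n+1)/2 key/value rows per head per layer, versus n with the cache. The trade-off is cache memory that grows linearly with sequence length.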