A better training method for reinforcement learning with human feedback

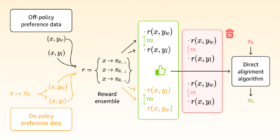

Contrasting training pairs with large reward differences mitigate spurious correlations and improve the performance of direct-alignment algorithms by as much as 20% to 40%.

Machine learning

Sailik Sengupta, Saket Dingliwal

May 02, 09:00 AM; May 13, 02:56 PM

Reinforcement learning with human feedback (RLHF) is the standard method for aligning large language models (LLMs) with human preferences, such as preferences for nontoxic language and factually accurate responses. Recently, one of […]
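The teaser's central idea is to build preference pairs whose two responses differ sharply in reward. The sketch below illustrates that selection step under stated assumptions. It is a hypothetical illustration, not the authors' method. It assumes that each prompt already has several sampled responses, each scored by some reward model. The names `select_contrastive_pairs`, `PreferencePair`, and `min_gap` are invented here.

```python
from dataclasses import dataclass


@dataclass
class PreferencePair:
    """One (chosen, rejected) training pair for a direct-alignment loss such as DPO."""
    prompt: str
    chosen: str
    rejected: str
    reward_gap: float


def select_contrastive_pairs(scored, min_gap=1.0):
    """Hypothetical helper: for each prompt, pair the highest- and lowest-reward
    responses and keep the pair only if their reward difference is at least min_gap.

    scored: dict mapping prompt -> list of (response, reward) tuples, where the
    rewards are assumed to come from a separately trained reward model.
    """
    pairs = []
    for prompt, candidates in scored.items():
        if len(candidates) < 2:
            continue  # a pair needs at least two responses
        best = max(candidates, key=lambda c: c[1])
        worst = min(candidates, key=lambda c: c[1])
        gap = best[1] - worst[1]
        # Require a strictly positive gap so chosen and rejected are never tied.
        if gap > 0 and gap >= min_gap:
            pairs.append(PreferencePair(prompt, best[0], worst[0], gap))
    # Order the surviving pairs from largest to smallest reward contrast.
    pairs.sort(key=lambda p: p.reward_gap, reverse=True)
    return pairs


if __name__ == "__main__":
    scored = {
        "q1": [("a", 1.0), ("b", -0.5), ("c", 0.25)],
        "q2": [("d", 0.5), ("e", 0.25)],
    }
    for pair in select_contrastive_pairs(scored, min_gap=1.0):
        print(pair)
```

In the usage example, only the `q1` pair (gap 1.5) survives the threshold. The `q2` pair (gap 0.25) is dropped as too weak a contrast. Raising or lowering `min_gap` trades dataset size against how clearly each pair separates good from bad responses.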