Can implicit regularization in deep learning be explained by norms?

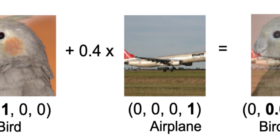

This post is based on my recent paper with Noam Razin (to appear at NeurIPS 2020), studying the question of whether norms can explain implicit regularization in deep learning. TL;DR: we argue they cannot.

Implicit regularization = norm minimization?

Understanding the implicit regularization induced by gradient-based optimization is possibly the biggest challenge facing theoretical deep learning these days. In classical machine learning we typically regularize via norms, so it seems only natural to hope that in deep learning […]